AI adoption is not a technology problem.

It’s a communication failure.

- trinimaturana

Across organisations, the same pattern keeps showing up: AI tools are being deployed. Licenses are approved. Pilots are launched. Training sessions fill up the calendar. Dashboards are built to track usage. And still, something is not working the way leaders expected.

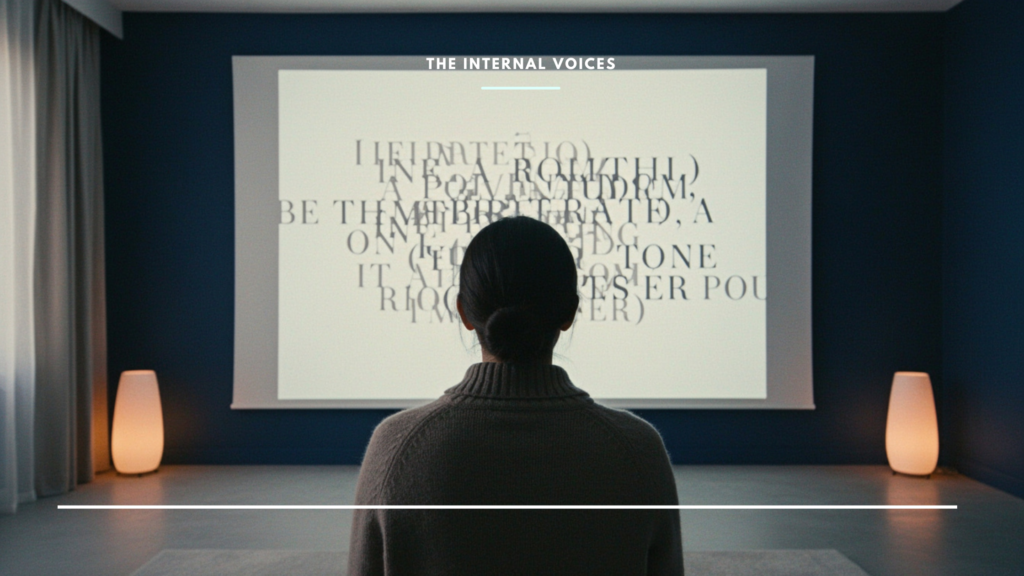

The gap between what is available and what is actually happening is not a technology gap. It is a meaning gap. And it keeps growing.

Access is not the problem. Integration is

Most organisations already have AI available to their people. What they do not have is clarity on where it fits, what it changes, and why it matters for the work people actually do every day.

The data reflects this tension. According to Microsoft’s Work Trend Index, 75% of knowledge workers already use AI at work. But much of that usage happens outside the tools their organisations officially provide. People are not waiting for permission. They are experimenting on their own, in the spaces where the organisation has not yet given them a clear answer.

Access exists. Adoption, in the way organisations expect and need it, does not.

The narrative is missing at the top too

Leaders know AI matters. More than 70% of CEOs expect it to fundamentally reshape their business, according to PwC’s Global CEO Survey. That awareness is genuine. But awareness at the top does not automatically produce clarity further down.

When senior leaders are still figuring out what AI means for the organisation, that uncertainty travels. It shows up in how initiatives are framed. In how priorities shift without explanation. In how people interpret, or misinterpret, what they are being asked to do. Without a clear narrative, AI becomes something sitting alongside the organisation rather than something reshaping how it operates.

When value is unclear, adoption becomes performative

The pressure to show AI is working has never been higher. And yet BCG estimates that only 26% of companies have moved from pilots to scaled implementation. The rest remain in a space that looks like progress from a distance but feels like ambiguity from the inside.

Employees engage just enough to signal participation. Teams test use cases that never fully connect to the work. Leaders mention AI in strategy documents without it meaningfully changing decisions. Adoption becomes visible. Transformation does not.

This is not a failure of willingness. It is a failure of coherence.

People are not resisting AI. They are navigating ambiguity

Employees are already curious. They experiment with external tools. They test ideas on their own time. They integrate AI into their workflows in ways the organisation has not formally sanctioned.

This behaviour is often treated as a governance issue. But it is also a signal.

The willingness is there. What is missing is a clear organisational position on how AI should be used, where it creates real value, and what sits outside acceptable boundaries. Without that, people do not resist. They navigate. And in navigating, they create fragmentation.

Training explains the tool. Communication explains the work

Organisations continue to invest heavily in upskilling. Workshops are delivered. Frameworks are introduced. New capabilities are mapped. And still, adoption struggles.

Because the core question remains unanswered.

What changes in my work because of this?

Training can explain how a tool functions. It cannot define how that tool fits into the system of decisions, expectations, and accountabilities that make up the organisation. That is interpretation. And interpretation is not a technical problem. It is a communication one.

The missing layer

This is where the role of internal communication becomes critical. Not in producing more content about AI. Not in writing better announcements or scheduling more town halls. But in doing what internal communication is actually built for: translating technology into meaning.

Internal communication is the function that connects abstract capability with concrete work. It defines where AI belongs. It creates shared understanding across teams operating in different contexts but expected to move in the same direction. It builds coherence between what is said at the top and what is experienced in practice.

Without that layer, adoption stays fragmented. Some teams move fast. Others wait. Most improvise.

With it, AI becomes part of how the organisation actually works. Not because everyone has the same tool, but because everyone has the same understanding of what they are doing with it.

Until that becomes clear, adoption will continue to look like progress from a distance and feel like ambiguity from the inside.

Because in the end, adoption is not a technical milestone. It is a cultural one.

AI doesn’t fail in organisations. It reveals where understanding was never there to begin with.